Today I demonstrated some of the new features of VMware vSAN 7.0 Update 3 (7.0 U3) related to 2-node deployments to one of our customers who uses this topology extensively. We focused particularly on improvements to the resilience of 2-node clusters and the witness host. You can find a short video about the features here. An extensive list of the new features and the release notes can be found here and here.

Another new feature I noticed but forgot to demonstrate to the customer is the new vSAN cluster shutdown and restart operation. I had already tested the shutdown and restart feature with my 4-node cluster, but once the demo was finished I decided to give the feature a try with the 2-node cluster and the external witness appliance.

Tag: VCSA

Configure the vCenter Server Login message

As a solution provider, my company often installs VMware vSphere environments for customers who do not administer the solution themselves. In addition to restricting user permissions, we have been working with individual login messages in the vCenter Server for some time now. We usually use the login message to remind the customer that the environment is managed by our company and that any changes must be approved in advance.

This post gives you a short overview on how to configure the login message using the vCenter Server administration interface.

Update to ESXi 6.7 U3 using esxcli fails with error "Failed to setup upgrade using esx-update VIB"

After the general availability of ESXi 6.7 U3, I decided it was time to update my homelab. I used the integrated vSphere Update Manager (VUM) for my nested vSAN cluster without any issues. During my VCAP-DVC Deploy preparation earlier this year I used an old Intel NUC system to deploy an additional vCenter Server Appliance (VCSA) in order to play around with Enhanced Linked Mode (ELM). I wanted to have the VCSAs in different Layer 3 networks, so I copied the design from the nested cluster and installed a nested router alongside a nested ESXi host. The nested ESXi host is used to run the second VCSA. Due to this design I cannot use the integrated VUM of the second VCSA to update this standalone ESXi host.

The virtual machines (VMs) on the standalone ESXi host are not very important, so I decided to shut them down together with the VCSA and update the nested ESXi host via the CLI. For details on how to update ESXi 6.x using the CLI, take a look at one of my previous blog posts here. I have used this method for quite some time, so I was certain I would have no issues with the update. But this time was different: the update failed.

Set up vCenter HA in vSphere 6.7 U1

Today I was asked by one of my customers to configure vCenter High Availability (VCHA) in his production vSphere 6.7 U1 environment. Since it had been some time since I last configured VCHA, I first tested the process in my homelab. The following procedure shows how to configure and activate this feature.

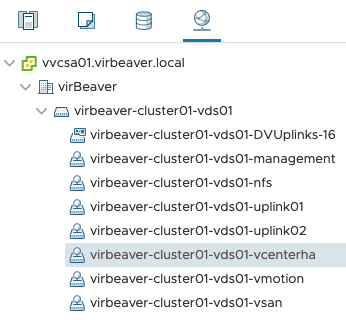

Step 1: Set up the VCHA network

Before the VCHA cluster can be set up, two things have to be prepared: a dedicated network for VCHA and IP addresses for the three vCenter nodes (Active, Passive, Witness). Both requirements are displayed to the administrator in the vSphere Client directly before the configuration of VCHA.

Since my homelab is a nested environment, the corresponding network can be set up without difficulty. In a regular customer situation where the ESXi hosts are connected via physical switches, the corresponding VLAN must be created and available on the switch ports before communication can function via the new VCHA network.

Step 2: Choose the VCHA network and deployment type

After clicking on SET UP VCENTER HA, you can select the VCHA network for the existing, active vCenter Server Appliance (VCSA) and the deployment type. Because my customer is running only a single site with a single SSO domain, I can leave the checkbox Automatically create clones for Passive and Witness nodes checked and let vSphere do the work for me.

Now I only have to select the Location (compute), Networks and Storage resources for the other two nodes (Passive and Witness) to continue with the second configuration step.

Configuring compute, storage and network resources for the Passive and Witness nodes

Step 3: Configure IP addresses for VCHA

After configuring the basic VM settings, only IP addresses in the VCHA network for the three nodes (Active, Passive and Witness) have to be assigned. With some support from the customer this should not be a problem either. Since the VCHA network is a private network that does not need to be routed, I do not configure the default gateway.
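Before typing the addresses into the wizard, it can help to sanity-check the plan. The following is only a minimal sketch in Python using the standard `ipaddress` module; the function name `validate_vcha_addresses`, the subnet `192.168.100.0/29` and the three addresses are made up for illustration and are not taken from my environment or from any vSphere API.

```python
import ipaddress


def validate_vcha_addresses(network, addresses):
    """Check that the three VCHA node IPs are distinct hosts in one subnet.

    `network` is the private VCHA subnet in CIDR notation and `addresses`
    maps the roles active/passive/witness to an IP string. Both are
    hypothetical inputs for this sketch.
    """
    net = ipaddress.ip_network(network, strict=True)
    required = {"active", "passive", "witness"}
    if set(addresses) != required:
        raise ValueError(f"expected exactly these roles: {sorted(required)}")

    parsed = {role: ipaddress.ip_address(ip) for role, ip in addresses.items()}

    # Every node needs its own address in the VCHA network.
    if len(set(parsed.values())) != len(parsed):
        raise ValueError("each node needs its own IP address")

    for role, ip in parsed.items():
        if ip not in net:
            raise ValueError(f"{role} address {ip} is outside {net}")
        if ip in (net.network_address, net.broadcast_address):
            raise ValueError(f"{role} address {ip} is not a usable host address")
    return parsed


# Example plan for an unrouted VCHA network (no default gateway needed).
plan = validate_vcha_addresses(
    "192.168.100.0/29",
    {
        "active": "192.168.100.1",
        "passive": "192.168.100.2",
        "witness": "192.168.100.3",
    },
)
```

The check is deliberately limited to what the step above requires: three distinct addresses inside the same private subnet. It does not test reachability between the nodes.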

Step 4: Wait for completion

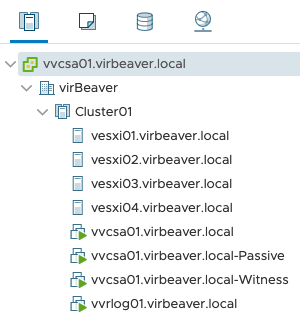

After a click on Finish our work is done and the next steps are performed by vSphere alone. First, a clone is created for the Passive node and configured according to our specifications. Then the same is done for the Witness node.

At the end we have two new vCenter VMs (Passive and Witness) in the environment.

In my homelab it took about 15 minutes until the VMs were rolled out and configured.

Also, a DRS rule that distributes the vCenter nodes across different hosts in my environment was automatically created and configured.
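The intent of such an anti-affinity rule can be illustrated with a few lines of Python. This is a sketch only: it does not talk to vCenter or DRS, and the function name `anti_affinity_violations` as well as the VM and host names are hypothetical placeholders.

```python
from collections import defaultdict


def anti_affinity_violations(placement):
    """Return the hosts that run more than one of the given VMs.

    `placement` maps a VM name to the host it currently runs on.
    An empty result means the VMs are spread across different hosts.
    """
    vms_by_host = defaultdict(list)
    for vm, host in placement.items():
        vms_by_host[host].append(vm)
    return {host: sorted(vms) for host, vms in vms_by_host.items() if len(vms) > 1}


# Hypothetical placement of the three vCenter nodes on three hosts.
spread = anti_affinity_violations(
    {"vcsa-active": "esx01", "vcsa-passive": "esx02", "vcsa-witness": "esx03"}
)

# Hypothetical placement where two nodes share a host.
stacked = anti_affinity_violations(
    {"vcsa-active": "esx01", "vcsa-passive": "esx01", "vcsa-witness": "esx02"}
)
```

In the first case the result is empty, because every node sits on its own host. In the second case `esx01` is reported, which is the situation the automatically created DRS rule is meant to avoid.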

Wrap-Up

Since the introduction of vCenter High Availability with vSphere 6.5, the process of rolling out this great feature has become easier and more intuitive. In a relatively simple environment, the high level of automation makes it a matter of minutes to get VCHA up and running. For more complex environments, manually cloning the VCSA remains an option.