Today I demonstrated some of the new features of VMware vSAN 7.0 Update 3 (7.0 U3) related to 2-node deployments to one of our customers who uses this topology extensively. We have focused particularly on improvements to the resilience of 2-node clusters and the witness Host. You can find a short video about the features here. An extensive list of the new features and the release notes can be found here and here.

Another new feature I noticed but forgot zu demonstrate to the customer ist the new vSAN cluster shutdown and restart operation. I already tested the shutdown and restart feature with my 4-node cluster but as the demo was finished I decided to give the feature a try with the 2-node cluster and the external witness appliance.

Kategorie: VMware

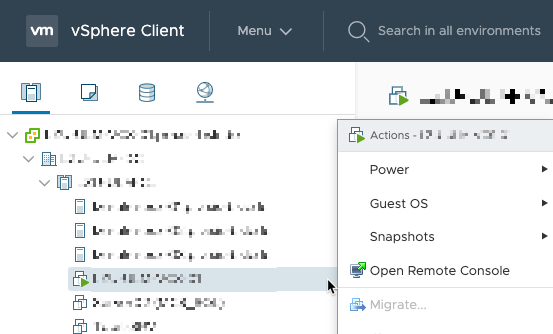

Migration options for a VM are greyed out

Every year, before Christmas, the update window is coming up for some of my customers. One of these customers was due for minor VMware vSphere updates today. Actually not a big deal even if the customer has not activated vSphere Distributed Resource Scheduler (DRS) on his cluster due to missing licenses. As in previous years, the task was to manually evacuate the individual ESXi hosts one by one and then standardize them via the vSphere Update Manager (VUM). At the beginning everything was running without any issues until I wanted to evacuate the vCenter Server Appliance (VCSA) as the last VM of the host. For whatever reason, the migrate function was grayed out in the context menu of the VM.

So far, vMotion has actually never caused any problems in this cluster. So it was once again time for a little round of troubleshooting.

Continue reading „Migration options for a VM are greyed out“NTP settings on host is different from the desired settings

To get some more flexibility in my Homelab I added another domain controller (Active Directory, DNS and DHCP). Unlike my first domain controller, which runs directly on the physical ESXi host (details can be found here), I installed the second domain controller inside the nested vSAN cluster. After configuring all services I wanted to use the new domain controller as an additional DNS server in my VMware vSphere environment. So I quickly adjusted the network and NTP settings of the vCenter Server appliance and the ESXi hosts and then everything should be fine. So far so good. No problem until then. Shortly after I added the additional domain controller in all locations a warning message appeared in my vSphere cluster.

Remove orphaned vCenter Server from SSO domain

After my „little“ homelab outage last year and the delivery of a new SSD I found some time to redeploy the nested cluster quite some time ago. During the preparation to my VCAP-DCV Deploy exam I deployed a second VCSA (vCenter Server Appliance) on my old Intel NUC and joined them to a single SSO domain to learn and try different things in the linked mode setup. That’s the reason why I received the „Could not connect to one or more vCenter Server Systems: https://<vcsaFQDN>:443/sdk“ every time I logged in to the second VCSA. Because I planned to redeploy the nested environment using the same IPs/FQDNs I wanted to make sure the orphaned VCSA is cleanly removed from the SSO configuration. This week one of my customers asked me for help with the same problem.A quick search and I found the following VMware KB article (again): Using the cmsso command to unregister vCenter Server from Single Sign-On (2106736). This time I decided to write a short blog post on the topic.

WeiterlesenUsing vSphere Custom Attributes with Veeam Backup & Replication

Most of my customers now use tag-based backup with Veeam Backup & Replication to protect their business-critical applications and services. This ensures that they no longer need to perform any configuration within the backup software to protect their workloads. Only the individual adjustments of the Guest Credentials for Application-Aware Processing have to be done in the Veeam Console. The added value you have by doing this I have already covered in another blog post.

In the ever accelerating IT world and the changes that come along with it, it is extremely important for my customers to be able to make fast and reliable statements about the data backup status of certain systems. Since we use vSphere tags to perform nearly all of our backup administration from within vCenter, it would be consistent to have the appropriate status information available at this location as well. This is where the Notification Settings within the Advanced Backup Settings come into play. How you can configure simple status updates in vCenter without installing additional plug-ins or tools, and what you should consider when doing so, I‘ ll show you below.

Conflicting VIBs when updating ESXi using Custom Images/Offline Bundles

As every year, some of my customers use the weeks after Christmas to update their environments. Nearly all of them run their ESXi hosts with vendor-specific Custom Images that provide additional drivers or agents over the standard VMware image. Unfortunately there are almost always problems with conflicting VIBs when they are updated. Of course it was the same this time. In my case, this time it was about a custom image from Fujitsu.

To perform the update successfully the problematic VIB must be removed. The necessary steps for this I would like to point out below.

Continue reading „Conflicting VIBs when updating ESXi using Custom Images/Offline Bundles“How to migrate running VMs to different datastores without Storage vMotion

Today I learned something very useful that I want to share with you. We have some smaller customers from the SMB segment. Here we often find small vSphere clusters with two or three ESXi hosts. These are usually licensed with vSphere Essentials Plus to use the benefits of vSphere High Availability (vSphere HA). The functions contained in this bundle are quite sufficient for regular operation. One function that is missing in vSphere Essentials Plus is the ability to move running VMs from one Datastore to another using Storage vMotion. In this post I want to share with you a way on how you can still move your running VMs between datastores.

Continue reading „How to migrate running VMs to different datastores without Storage vMotion“My VMworld 2019 Europe recap

The last days flew by. This week I attended VMworld 2019 Europe. It was my first participation in an event of this size so I decided to write a few lines about it. This post should give you an idea of my personal impression of the venue and everything around it.

Continue reading „My VMworld 2019 Europe recap“Configure the vCenter Server Login message

As a solution provider, my company often installs VMware vSphere environments for customers who do not administer the solution themselves. In addition to restricting user permissions, we have been working with individual login messages in the vCenter Server for some time now. We usually use the login message to remind the customer that the environment is managed by our company and that any changes must be approved in advance.

This post gives you a short overview on how to configure the login message using the vCenter Server administration interface.

Continue reading „Configure the vCenter Server Login message“Update to ESXi 6.7 U3 using esxcli fails with error „Failed to setup upgrade using esx-update VIB“

After the gerneral availability of ESXi 6.7 U3, I decided it is time to update my homelab. I used the integrated vSphere Update Manager (VUM) for my nested vSAN Cluster without any issues. During my VCAP-DVC Deploy preparation earlier this year I used an old Intel NUC system to deploy an additional vCenter Server Appliance (VCSA) in order to play around with Enhanced Linked Mode (ELM). I wanted to have the VCSAs in different Layer 3 networks, so I copied the design from the nested cluster and installed a nested router alongside with a nested ESXi host. The nested ESXi is used to host the second VCSA. Due to this design I can not use the integrated VUM of the second VCSA to update this standalone ESXi host.

The virtual machines (VMs) on the standalone ESXi host are not very important, so I decided to shut them down together with the VCSA and update the nested ESXi host via CLI. For details on how to update ESXi 6.x using the CLI, take a look at one of my previous blog posts here. I used this method quite some time so I was certain to have no issues with the update. But this time it was different, the update failed.